Using LEMP stack on Ubuntu to host a Laravel app

May 16, 2024

Over the last couple of years, NGINX (part of the LEMP stack) has steadily gained popularity until it finally surpassed its main rival, Apache (part of the LAMP stack), in market share, making it currently the most used web server. The LEMP stack, which we will learn to configure in this article, consists of 4 parts: a Linux operating system (in this case, Ubuntu), an NGINX web server, a MySQL database, and PHP. Beyond that, in order to have a fully functional Laravel app, we are going to install and configure Composer, Supervisor, and an SSL certificate. Along the way, we are also going to perform basic UFW and cron setups, and I'll explain how we can clone and host an existing project from GitHub. In the end, we are going to create a small shell script that runs a set of commands useful when deploying updates to the codebase located on the server.

I'll be installing everything on a very basic development server that runs Ubuntu 22.04 LTS and has 1 CPU core and 1 GB of RAM. In order to perform the necessary steps, you will need to SSH into your server as the root user or, preferably, as another user with sudo privileges (working directly as root is not recommended). For that purpose, I'll be using Git Bash on Windows 10.

A little note about Ubuntu versions before we proceed. When I first started writing the article, the latest Ubuntu LTS version was 22.04, but in the meantime, a new version, 24.04 LTS, was released. After finishing the article, I created a new server running 24.04, went through the whole write-up again, and tested each command on that new server. So the instructions you are about to go through should work about the same on both versions.

I won't go into too many details when it comes to accessing your server for the first time. Usually, when you create a new server instance, your hosting provider will allow you to upload a public SSH key (or use an existing one that you assigned to your account), which will be added to the authorized keys on the server. Later on, when you try to SSH into the server, that public key is matched against the private SSH key on your computer, and you are allowed to access the server's terminal. If you are not using SSH keys, you will be prompted for a password instead, but keys are generally the more secure and recommended option.

As you may already suspect, this article will cover quite a few topics, so take your time and feel free to take breaks between chapters.

Now that we have a nice overview of the steps we are going to perform, it's time to get our hands dirty.

Configuring UFW, NGINX, MySQL, and PHP

First, SSH into the server as a user with sudo privileges as you usually would, by using a public IP address and a username. For example, like so:

ssh user@203.0.113.0

Or if you have multiple SSH keys, specify the key:

ssh -i /path/to/private/key.pem user@203.0.113.0
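If you connect to the same server often, the address, user, and key can live in your SSH client configuration so that a short alias is enough. The sketch below only prints such an entry. The function name ssh_config_entry, the alias laravel-server, the user name, and the key path are placeholders for illustration, not values from this setup.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print an entry for ~/.ssh/config.
# Host, HostName, User, and IdentityFile are standard OpenSSH client options.
ssh_config_entry() {
  local name="$1" address="$2" user="$3" key_path="$4"
  cat <<EOF
Host ${name}
    HostName ${address}
    User ${user}
    IdentityFile ${key_path}
EOF
}

# Print an example entry (all values are placeholders).
ssh_config_entry laravel-server 203.0.113.0 user /path/to/private/key.pem
```

If you append the printed entry to ~/.ssh/config, typing ssh laravel-server should be enough to connect.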

Next, we are going to update our server's package index:

sudo apt update

Now we are going to take a look at our UFW settings. UFW stands for Uncomplicated Firewall. We are going to use it to allow access to our server only through SSH, HTTP, and HTTPS (meaning ports 22, 80, and 443, respectively). We can check the current status of the firewall by typing this command:

sudo ufw status

Don't worry if the firewall isn't active yet; we are going to enable it in just a sec. We can also use another command to see a list of the available applications (basically preconfigured profiles that can be used to allow different types of traffic):

sudo ufw app list

Currently, on that list, you might see only an OpenSSH profile, which is used to allow SSH connections to the server. Before we proceed, let's specifically allow SSH access through the firewall with this command (even though the UFW isn't active yet, we are going to need this profile enabled later on):

sudo ufw allow ssh

Great, now let's install NGINX (when prompted, press Y and ENTER to continue):

sudo apt install nginx

Confirm that everything went as planned by checking the status:

service nginx status

If the service is active and running, we are good to proceed. Now, since we won't be using SSL just yet, we are going to enable just HTTP traffic to our server with this command:

sudo ufw allow 'Nginx HTTP'

Finally, we can enable UFW like so (press y when prompted):

sudo ufw enable

When checking the UFW status again (sudo ufw status), you might see something like this:

Status: active

To Action From

-- ------ ----

22/tcp ALLOW Anywhere

Nginx HTTP ALLOW Anywhere

22/tcp (v6) ALLOW Anywhere (v6)

Nginx HTTP (v6) ALLOW Anywhere (v6)

This just means that currently only SSH and HTTP traffic (on the default ports 22 and 80) is allowed. Attempts to access your server on any other port will be blocked by the firewall.

If you configured everything properly, you should be able to see a default NGINX page when visiting your server's public IP address (which we also used with SSH), for example:

http://203.0.113.0

Nice! Now let's install MySQL (when prompted, press Y and ENTER to continue).

sudo apt install mysql-server

At the time of writing this article, the latest version of MySQL was 8.0.36. You can check if the service is running with this command:

sudo systemctl status mysql

After we have confirmed that everything is in order, we should run a MySQL security script that will perform certain adjustments to our installation, making it more secure. We can start by running this command:

sudo mysql_secure_installation

After running the command, you will be prompted if you would like to enable a VALIDATE PASSWORD component. If enabled, any password you set will need to satisfy certain conditions, depending on the level you choose. That basically means that you will be forced to use stronger passwords when setting up your databases. Which is, of course, a good thing; you just need to make sure your passwords are complex enough. Even though this step isn't required, we are going to type Y and see what's next. After you decide to enable the component, you will be asked to select a level of password complexity:

There are three levels of password validation policy:

LOW Length >= 8

MEDIUM Length >= 8, numeric, mixed case, and special characters

STRONG Length >= 8, numeric, mixed case, special characters and dictionary file

Please enter 0 = LOW, 1 = MEDIUM and 2 = STRONG:

We are going to use the strongest level. To proceed, type 2. After doing so, you will see that auth_socket authentication was set by default. Ignore this part for a second; I'll elaborate on it soon. Continuing with the setup, you will be asked to remove anonymous users, disallow root remote login, remove test databases, and reload the privilege tables. To each of those, you can respond with Y and ENTER. After those 4 additional confirmations, you should be all set.

Now, let's go back a bit to that auth_socket authentication message from a couple of moments before. The message stated something like this:

Skipping password set for root as authentication with auth_socket is used by default.

If you would like to use password authentication instead, this can be done with the "ALTER_USER" command.

See https://dev.mysql.com/doc/refman/8.0/en/alter-user.html#alter-user-password-management for more information.

What this means is that if you are logged in to the server as a user with sudo privileges, you won't have to type a password to access MySQL as its main administrative (root) user. So, just to clarify, there is the system's (Ubuntu) root user and there is MySQL's root user, and you can log in as MySQL's root user only by running the client with the system's root privileges (for example, through sudo). The takeaway is that this setup makes access to MySQL more secure, since only users who already have root privileges on the system can perform administrative tasks of the highest order. This also means that we won't be able to access MySQL databases from our Laravel app as MySQL's root user; instead, we will create a dedicated user with lesser privileges for each database.

If everything went well with the secure installation, you should be able to access MySQL by typing:

sudo mysql

There, you can check if everything is in order by performing a simple query to list all databases:

SHOW DATABASES;

To leave the MySQL shell, just type:

exit

Now, using auth_socket for authentication is the default in this installation and the more secure option. But if you really want to specify a password for MySQL's root user, you can do so with the following set of commands. First, log in to MySQL's shell again as the root user:

sudo mysql

Now, I'm going to copy all of the commands that should be executed in MySQL in a single block, so just keep in mind that they should be typed in the terminal one by one, row by row.

SELECT user,authentication_string,plugin,host FROM mysql.user;

ALTER USER 'root'@'localhost' IDENTIFIED WITH caching_sha2_password by 'G%xYT5yEg^YBTgY2nK&fZ7ka';

FLUSH PRIVILEGES;

SELECT user,authentication_string,plugin,host FROM mysql.user;

exit;

What happened above was that we first checked the authentication method for the root user, and we confirmed that it is currently set to auth_socket:

| root | auth_socket | localhost |

Then we set a password for the MySQL's root user using caching_sha2_password, and there is an example of a random password I just generated (G%xYT5yEg^YBTgY2nK&fZ7ka) to show you the type of password strength that is expected, based on the level we have chosen when we were running the secure installation script. Make sure to generate a password of your own. After that, we are flushing the privileges and running the same select query to confirm that the root user now indeed has a password set:

| root | $A$005SOMESALTANDPASSWORD | caching_sha2_password | localhost |

In the end, we need to restart MySQL for the changes to take effect:

sudo service mysql restart
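The password shown in the ALTER USER statement is only a sample, so you will need one of your own. As a minimal sketch, assuming a Linux shell with /dev/urandom available, the hypothetical generate_password helper below keeps drawing random characters until the result contains a lowercase letter, an uppercase letter, a digit, and a special character. It does not run the dictionary check that the STRONG policy adds, so MySQL may still reject an unlucky candidate.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a random password of the requested length
# (default 24; intended for lengths well below 100 characters).
generate_password() {
  local length="${1:-24}" candidate
  while true; do
    # Take a bounded chunk of random bytes and keep only the allowed characters.
    candidate="$(head -c 1024 /dev/urandom | LC_ALL=C tr -dc 'A-Za-z0-9!%^&*_+=-' | head -c "$length")"
    # Retry unless the length and all four character classes are satisfied.
    if [ "${#candidate}" -eq "$length" ] &&
       [[ "$candidate" =~ [[:lower:]] ]] &&
       [[ "$candidate" =~ [[:upper:]] ]] &&
       [[ "$candidate" =~ [[:digit:]] ]] &&
       [[ "$candidate" =~ [^[:alnum:]] ]]; then
      printf '%s\n' "$candidate"
      return 0
    fi
  done
}

generate_password 24
```

The character set deliberately leaves out quotes and backslashes, so the result can be pasted between single quotes in a SQL statement without escaping.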

Now, when it comes to MySQL 8 and some older PHP versions (before 7.4.4), default drivers that allow PHP to communicate with MySQL don't support the caching_sha2_password authentication method, so you might need to use mysql_native_password method when setting the password (more about that in the PHP docs), like so:

ALTER USER 'root'@'localhost' IDENTIFIED WITH mysql_native_password by 'G%xYT5yEg^YBTgY2nK&fZ7ka';

In case you ever want to revert the root user back to auth_socket, you would need to log in as the root user first, but this time using the password you set previously (note the -p flag for password):

mysql -u root -p

Once you are logged in with the password, run these commands again, row by row:

SELECT user,authentication_string,plugin,host FROM mysql.user;

ALTER USER 'root'@'localhost' IDENTIFIED WITH auth_socket;

FLUSH PRIVILEGES;

SELECT user,authentication_string,plugin,host FROM mysql.user;

exit;

Again, restart MySQL:

sudo service mysql restart

Whew, that's enough regarding MySQL for now. Let's proceed with installing PHP.

NGINX needs an additional program that will act as a mediator between the web server and the PHP interpreter. That program is PHP-FPM (PHP FastCGI Process Manager). This just means that all PHP requests will be passed from NGINX to PHP-FPM for processing. If you want to learn more about PHP-FPM, you can refer to my other article, where I'm discussing the most important settings: A deeper dive into optimal PHP-FPM settings.

We will also need to install some additional modules and extensions that are needed for PHP to communicate with MySQL and to satisfy some other requirements for Laravel to function properly.

Ubuntu 22.04 comes with PHP 8.1 as the default version in its repositories, so if we want to install any other version of PHP, we first need to add a third-party repository (the ondrej/php PPA), which offers a variety of versions. You can achieve that with these two commands:

sudo apt install software-properties-common

sudo add-apt-repository ppa:ondrej/php

For the first command, you might get a notification that everything is already up-to-date, and that's fine. For the second command, press ENTER when prompted. Keep in mind that on Ubuntu 24.04 the default PHP version is 8.3, so if you plan on installing only that version, there is no need to add the extra repository.

Now let's install PHP-FPM (we will be using PHP 8.3) and the extensions (press Y and ENTER when prompted):

sudo apt install php8.3-fpm php8.3-mysql php8.3-curl php8.3-mbstring php8.3-xml

Now, if you run:

php -v

You should get a confirmation that PHP 8.3 was installed. Bear in mind that if you want to install some other PHP version, you will need to specify it explicitly in the command, for PHP-FPM and for each extension; otherwise, you might end up with the default version, 8.1 (or 8.3 on Ubuntu 24.04). So, for example, if we wanted to install PHP 7.4, the command would look like this:

sudo apt install php7.4-fpm php7.4-mysql php7.4-curl php7.4-mbstring php7.4-xml
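Since the version has to be repeated for every package, it is easy to mistype one of them. As a small sketch, the hypothetical php_packages helper below builds the same package list used in this article for whichever version you pass in.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the PHP-FPM package and the extensions used in
# this article, each prefixed with the requested PHP version.
php_packages() {
  local version="$1" ext packages=()
  for ext in fpm mysql curl mbstring xml; do
    packages+=("php${version}-${ext}")
  done
  # Join the package names with single spaces.
  printf '%s\n' "${packages[*]}"
}

php_packages 8.3
# prints: php8.3-fpm php8.3-mysql php8.3-curl php8.3-mbstring php8.3-xml
```

With the helper defined, sudo apt install $(php_packages 7.4) would expand to the PHP 7.4 command shown above.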

That's it for this chapter. We installed the core of the LEMP stack, now let's see what else we need to install and configure to get our app running.

Configuring Composer, Database, and Laravel

In order to manage PHP packages in our project, we are going to need to install Composer. Composer has a few dependencies, most of which we already installed or are already available in Ubuntu (git, curl, php-cli). What's left is to install unzip to extract zipped archives. Let's do that now:

sudo apt install unzip

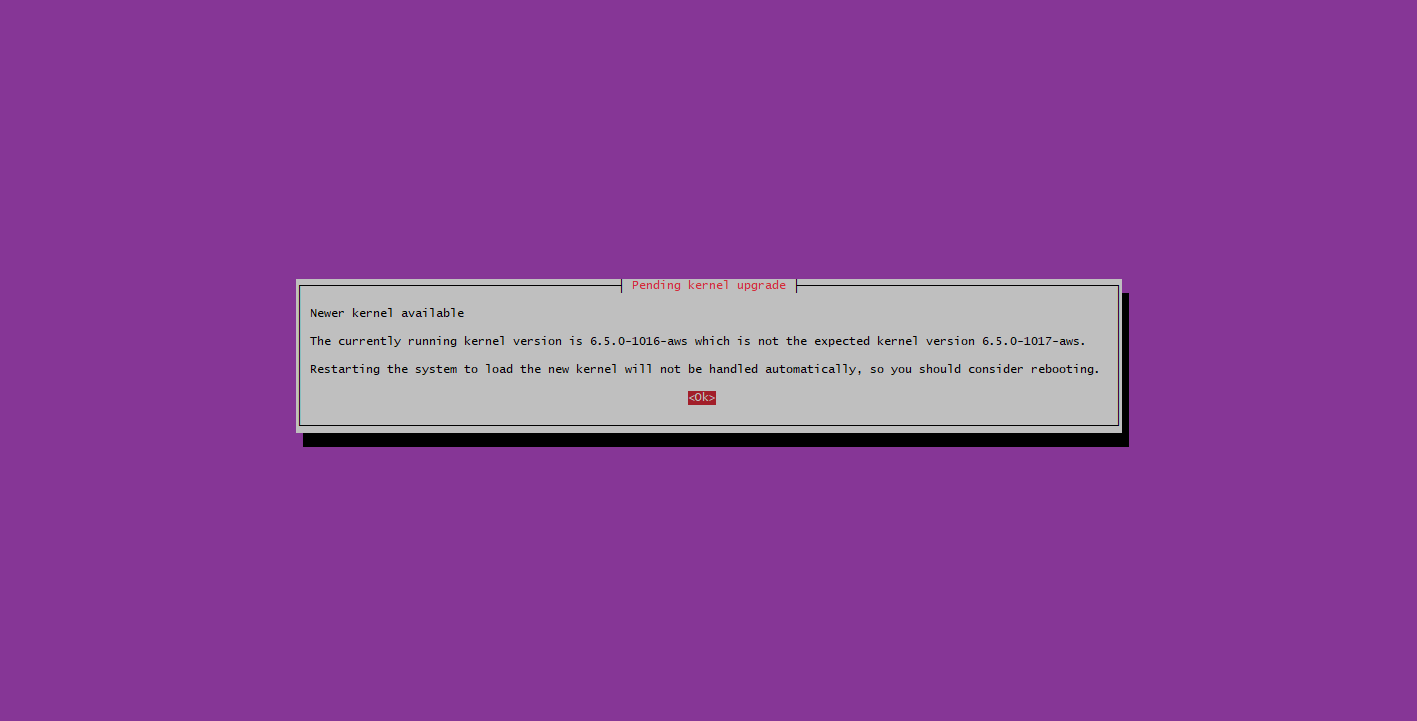

By the way, if you ever get a kernel upgrade screen followed by a services restart screen during this process, just switch to Ok and type ENTER:

After that, you can reboot the system with this command:

sudo reboot

Note that after executing this command, you will be logged out of the session, and you should wait a minute or so before attempting to SSH to the server again, giving it time to reboot.

Now, let's install composer (you can copy the whole command and run all of the lines at once; there is no need to go row by row):

curl -sS https://getcomposer.org/installer | php &&

sudo mv composer.phar /usr/local/bin/composer &&

chmod +x /usr/local/bin/composer

Note that there is a more secure way to install Composer by verifying that the installer is not corrupted. You can refer to the Command-line installation section on the Composer's download page on how to achieve that.
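Composer's download page performs that check with PHP's hash_file function and a published SHA-384 checksum. As a rough equivalent, and not the official procedure, the sketch below does the comparison with sha384sum from coreutils. The function name verify_sha384 is hypothetical, and the expected hash must come from the Composer site, not from this article.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the file's SHA-384 digest equals
# the expected hexadecimal string.
verify_sha384() {
  local file="$1" expected="$2" actual
  actual="$(sha384sum "$file" | cut -d ' ' -f 1)"
  [ "$actual" = "$expected" ]
}

# Self-contained demonstration on a throwaway file.
sample="$(mktemp)"
printf 'example installer contents\n' > "$sample"
known_hash="$(sha384sum "$sample" | cut -d ' ' -f 1)"

if verify_sha384 "$sample" "$known_hash"; then
  echo "checksum matches"
else
  echo "checksum mismatch"
fi
```

In practice, you would save the installer to a file first (for example with curl -sS https://getcomposer.org/installer -o composer-setup.php), compare it against the published checksum, and run it with php only if the check passes.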

If everything went well, Composer was installed, and we can confirm that by typing:

composer -v

Since we only have one PHP version, Composer will use that one when performing any actions, but in case you end up installing multiple versions of PHP and PHP CLI, you can switch the currently active PHP CLI version by using this command:

sudo update-alternatives --config php

When running the command above, you might end up with something like this:

There are 2 choices for the alternative php (providing /usr/bin/php).

Selection Path Priority Status

------------------------------------------------------------

* 0 /usr/bin/php8.3 83 auto mode

1 /usr/bin/php7.4 74 manual mode

2 /usr/bin/php8.3 83 manual mode

Press <enter> to keep the current choice[*], or type selection number: 1

update-alternatives: using /usr/bin/php7.4 to provide /usr/bin/php (php) in manual mode

Here, I just typed 1 then ENTER to switch to PHP CLI 7.4. You can revert the changes with the same command. And if you now type php -v again, you should see the updated version.

OK, great, we installed Composer; now let's go back to MySQL for a few moments. If you remember, we said that we were going to need to create a separate user with lesser privileges and a separate database for our Laravel app. First, log in as root as we did previously:

sudo mysql

Then create a new database named laravel_database like this:

CREATE DATABASE laravel_database;

In the next step, we are going to create a new user, laravel_user, and set a password for it. Again, please remember that in order for the password to be considered valid, it has to satisfy the highest level of complexity, as specified in the secure MySQL installation we performed previously. Also, again, make sure that you generate a password of your own:

CREATE USER 'laravel_user'@'%' IDENTIFIED WITH caching_sha2_password BY '!j1SehLN9FMurvtkcs9xp&R5';

Now, we are going to give full access to the laravel_user for the laravel_database we just created (but only to that database):

GRANT ALL ON laravel_database.* TO 'laravel_user'@'%';
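If you set up servers regularly, the same three statements can be generated from a script instead of typed by hand. This is a minimal sketch: build_db_sql is a hypothetical helper that only prints the SQL, and the IF NOT EXISTS clauses are my addition so that running it twice does no harm.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the SQL that creates a database and a user that
# has access to that database only. The password must not contain a single
# quote or a backslash, because it is placed inside a quoted SQL string.
build_db_sql() {
  local db="$1" user="$2" host="$3" password="$4"
  cat <<SQL
CREATE DATABASE IF NOT EXISTS \`${db}\`;
CREATE USER IF NOT EXISTS '${user}'@'${host}' IDENTIFIED WITH caching_sha2_password BY '${password}';
GRANT ALL ON \`${db}\`.* TO '${user}'@'${host}';
SQL
}

# Print the statements for the names used in this article (placeholder password).
build_db_sql laravel_database laravel_user '%' 'replace-with-your-own-password'
```

To apply the statements instead of just printing them, the output could be piped into the client: build_db_sql ... | sudo mysql.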

That's it. You can type exit to end the root user's session and log in as our newly created user, using the password we just set (paste the password when prompted):

mysql -u laravel_user -p

If you type:

SHOW DATABASES;

You will see our new database listed. You can exit from MySQL again. It's finally time to clone our existing Laravel project to the server and configure it. Since we installed Composer, you could also create a new Laravel project directly on the server, but it's much more likely that you will be in a situation where you will need to clone an existing project from GitHub.

Before that, let's check our existing folder structure. Currently, the /var/www/html folder already exists, and inside of it there is just one HTML page (index.nginx-debian.html), which is the default NGINX page we recently saw when we visited our server's public IP address. We can remove the whole html folder and create a new folder that will hold the Laravel project. Go to the /var/www folder first:

cd /var/www

and delete the html folder (and its contents) like so:

sudo rm -r html

Then create a new empty folder named laravel:

sudo mkdir laravel

Then go inside that folder:

cd laravel

and clone the project from GitHub into the current laravel folder like so:

sudo git clone https://{PERSONAL_ACCESS_TOKEN}@github.com/goran-popovic/laravel.git .

Let me explain a bit more what is going on in the command above. There are numerous ways of cloning a GitHub repo, but in this case, I'm using the HTTPS URL of a private repo with a personal access token to authenticate myself. You can see that my username is goran-popovic, and the name of the repo is laravel. What's left is the token. You can check out the GitHub docs on how to create a personal access token and which alternatives are available. In general, you would go to your Account settings > Developer settings and create a fine-grained or a classic token there, which you would paste before the @github.com part of the URL. Your token might start with ghp_ or github_pat_, depending on which token type you have chosen.

For security reasons it's not safe to run Composer as the root user, so we first need to change the ownership of files and folders in our current directory (laravel). I will set my current (non-root, but with sudo privileges) user as the owner and make them accessible to the webserver's (NGINX) group (www-data):

sudo chown -R $USER:www-data .

Then we can install the necessary composer packages:

composer install --optimize-autoloader --no-dev

Next, we also need to give the necessary permissions to the owner (our user) and the webserver (www-data) for all the files and directories in the project:

sudo find . -type d -exec chmod 775 {} \;

sudo find . -type f -exec chmod 664 {} \;

And lastly, we need to give the webserver the rights to read and write to the storage and bootstrap/cache folders:

sudo chgrp -R www-data storage bootstrap/cache

sudo chmod -R ug+rwx storage bootstrap/cache
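If the numeric modes are unfamiliar: 775 gives the owner and the group full access to a directory and everyone else read and traverse access, while 664 lets the owner and the group read and write a file and everyone else only read it. The sketch below applies the same two find commands to a throwaway directory, so you can see the result without touching the project. It assumes GNU stat, which is what Ubuntu ships.

```shell
#!/usr/bin/env bash
# Build a small throwaway tree that mimics part of a Laravel project.
demo_dir="$(mktemp -d)"
mkdir -p "$demo_dir/storage/logs"
touch "$demo_dir/storage/logs/laravel.log"

# Same commands as above, pointed at the demo tree (no sudo needed here).
find "$demo_dir" -type d -exec chmod 775 {} \;
find "$demo_dir" -type f -exec chmod 664 {} \;

# Read the resulting modes back in octal form.
dir_mode="$(stat -c '%a' "$demo_dir/storage")"
file_mode="$(stat -c '%a' "$demo_dir/storage/logs/laravel.log")"
echo "directories: $dir_mode, files: $file_mode"
# prints: directories: 775, files: 664
```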

We can proceed with the Laravel-specific setup that might be already familiar to you. First, we need to copy the example .env file:

cp .env.example .env

Then we can edit it:

nano .env

The most important details here to provide are those related to the database setup, like the database name, username, and password, all of which we created previously. Also, for the APP_URL environment variable, you can add your public IP address for now. We are going to revisit that later, when we get to the SSL certificate section. I'm going to set APP_ENV to development and APP_DEBUG to true in this example, but you can set those to production and false if you want, depending on how you would like to use the app later on.

APP_NAME=Laravel

APP_ENV=development

APP_KEY=

APP_DEBUG=true

APP_TIMEZONE=UTC

APP_URL=http://203.0.113.0

DB_CONNECTION=mysql

DB_HOST=127.0.0.1

DB_PORT=3306

DB_DATABASE=laravel_database

DB_USERNAME=laravel_user

DB_PASSWORD=!j1SehLN9FMurvtkcs9xp&R5

Great! Now, we just need to run the artisan command to generate an app key:

php artisan key:generate

And another command to run the migrations (when prompted, select Yes if you set the APP_ENV to production):

php artisan migrate

Awesome! By now, we should have successfully configured our Laravel app. In the next chapter, we are going back to NGINX, for which we need to perform some additional adjustments, and we are also going to install an SSL certificate.

Revisiting NGINX and installing SSL

Currently, if you visit your public IP address (we used http://203.0.113.0 as an example), you will notice that a 404 (not found) response is being returned. In order for our Laravel app to be accessible through our public IP address, we are going to need to instruct NGINX on how the app should be served. That can be achieved by using configuration files. Let's create one:

sudo nano /etc/nginx/sites-available/laravel.conf

What should we add to this file? An example from Laravel docs provides a good starting point. We are just going to alter that file a bit to fit our needs, so the final version will look like this:

server {

listen 80;

listen [::]:80;

server_name 203.0.113.0;

root /var/www/laravel/public;

add_header X-Frame-Options "SAMEORIGIN";

add_header X-Content-Type-Options "nosniff";

index index.php;

charset utf-8;

location / {

try_files $uri $uri/ /index.php?$query_string;

}

location = /favicon.ico { access_log off; log_not_found off; }

location = /robots.txt { access_log off; log_not_found off; }

error_page 404 /index.php;

location ~ \.php$ {

fastcgi_pass unix:/var/run/php/php8.3-fpm.sock;

fastcgi_param SCRIPT_FILENAME $realpath_root$fastcgi_script_name;

include fastcgi_params;

}

location ~ /\.(?!well-known).* {

deny all;

}

}

The most important things to note here are that NGINX will listen for connections on the default HTTP port 80, the server_name defines an IP address or a domain from which the app will be accessible, and the root defines the document root where the main file, which will process the incoming requests, is stored (in this case, that file is index.php, located in the public folder, which is a default for Laravel apps). Also note that we specified the location of the socket file (php8.3-fpm.sock), which declares what socket is associated with which version of PHP-FPM. If you were using some other version, you would specify it similarly, for example: php8.1-fpm.sock. To save and close the file, just press CTRL+X and then Y and ENTER to confirm.
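Because only the server_name and the PHP-FPM socket change between setups, the file can also be generated. The sketch below prints the same server block with those two values filled in. render_server_block is a hypothetical helper, and the optional third argument (the project directory) defaults to the /var/www/laravel path used in this article.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the NGINX server block from this article for a
# given server name and PHP version. NGINX variables are escaped (\$) so the
# shell leaves them untouched.
render_server_block() {
  local server_name="$1" php_version="$2" app_root="${3:-/var/www/laravel}"
  cat <<EOF
server {
    listen 80;
    listen [::]:80;
    server_name ${server_name};
    root ${app_root}/public;

    add_header X-Frame-Options "SAMEORIGIN";
    add_header X-Content-Type-Options "nosniff";

    index index.php;
    charset utf-8;

    location / {
        try_files \$uri \$uri/ /index.php?\$query_string;
    }

    location = /favicon.ico { access_log off; log_not_found off; }
    location = /robots.txt  { access_log off; log_not_found off; }

    error_page 404 /index.php;

    location ~ \.php\$ {
        fastcgi_pass unix:/var/run/php/php${php_version}-fpm.sock;
        fastcgi_param SCRIPT_FILENAME \$realpath_root\$fastcgi_script_name;
        include fastcgi_params;
    }

    location ~ /\.(?!well-known).* {
        deny all;
    }
}
EOF
}

render_server_block 203.0.113.0 8.3
```

One way to use it would be to pipe the output into the config file with render_server_block 203.0.113.0 8.3 | sudo tee /etc/nginx/sites-available/laravel.conf and then check it with sudo nginx -t.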

For this configuration to take effect, we need to create a symbolic link from the sites-available to the sites-enabled directory, like this:

sudo ln -s /etc/nginx/sites-available/laravel.conf /etc/nginx/sites-enabled/

While there, we can also unlink the default configuration that we used when we first installed NGINX:

sudo unlink /etc/nginx/sites-enabled/default

Next, we can test the configuration for any syntax errors by running:

sudo nginx -t

Finally, we need to reload NGINX for the changes to be applied:

sudo systemctl reload nginx

If everything went well, you should be able to access your Laravel app on the public IP address you specified.

Great job! You may have noticed a Not secure warning in the address bar. That is because we haven't installed an SSL certificate yet. To install one, you are going to need a domain name pointed at your server, like example.com. If you don't have a domain name yet, that's fine; you can just test things as they are, using your public IP address, and come back to the SSL section when you are ready to present your website to a wider audience.

We are going to use Let’s Encrypt (a Certificate Authority) to obtain a free SSL certificate, which will allow us to enable encrypted HTTPS traffic on our server. To request the certificate, we will use Certbot, a client that automates most of the process of obtaining an SSL certificate as well as its renewal.

Certbot is distributed as a snap. First, make sure that snap's core is installed and up to date:

sudo snap install core; sudo snap refresh core

Then we can install Certbot:

sudo snap install --classic certbot

In the end, we will link the certbot command from the snap install directory to our path, so we will be able to run it more easily by just typing certbot.

sudo ln -s /snap/bin/certbot /usr/bin/certbot

Before we are able to generate a certificate, we are going to need to make some adjustments to our existing configuration. First, if you remember, we enabled just HTTP traffic on port 80 using UFW. Now we need to enable HTTPS on port 443 as well. We can do that by running this command:

sudo ufw allow 'Nginx Full'

Then delete the previous profile:

sudo ufw delete allow 'Nginx HTTP'

and check the status:

sudo ufw status

Now we should only have 2 rules active (each with its IPv6 counterpart): Nginx Full and 22/tcp for SSH access.

Next, we need to edit our NGINX config file:

sudo nano /etc/nginx/sites-available/laravel.conf

and replace the public IP address with a domain name. So instead of:

server_name 203.0.113.0;

use:

server_name example.com;

Then perform the 2 commands to verify the config file and to reload NGINX (one by one):

sudo nginx -t

sudo systemctl reload nginx

Then edit Laravel's .env file:

sudo nano /var/www/laravel/.env

and again replace the HTTP version of the APP_URL variable, which uses the public IP address (http://203.0.113.0), with an HTTPS version that uses the domain name (https://example.com). The end result would look something like this:

APP_URL=https://example.com
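If you prefer not to open an editor for a one-line change, the same update can be scripted. This is a sketch with a hypothetical set_env_value helper; it assumes GNU sed (as on Ubuntu) and values that contain neither | nor &, which is true for a plain URL. The demonstration runs on a temporary file, not on the real .env.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set KEY=VALUE in an env file, replacing the existing
# line if the key is present and appending a new line otherwise.
set_env_value() {
  local file="$1" key="$2" value="$3"
  if grep -q "^${key}=" "$file"; then
    sed -i "s|^${key}=.*|${key}=${value}|" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

# Demonstration on a throwaway file.
env_file="$(mktemp)"
printf 'APP_NAME=Laravel\nAPP_URL=http://203.0.113.0\n' > "$env_file"
set_env_value "$env_file" APP_URL https://example.com
cat "$env_file"
```

Pointed at the real file, the call would be set_env_value /var/www/laravel/.env APP_URL https://example.com.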

Finally, cache the new configuration by using:

cd /var/www/laravel && php artisan config:cache

Now we are ready to install the SSL certificate. Run this command to obtain a certificate for your domain (enter email address when prompted, accept the terms, and choose if you want to share your email address):

sudo certbot --nginx -d example.com

If everything went well, when you visit your domain, you should be able to confirm that the connection is now secure.

Certificates are valid only for 90 days and need to be renewed before that time expires. Certbot should renew those certificates automatically for us. It will add a timer that will run twice a day and renew any certificates that are within 30 days of expiration. You can always check the status of the timer by running this command:

sudo systemctl status snap.certbot.renew.timer

You can also do a dry run and test the renewal procedure, like this:

sudo certbot renew --dry-run

If there were no errors, you are all good. We have successfully configured NGINX and installed a valid SSL certificate. Now, since most Laravel apps are using a Task Scheduler to schedule automatic execution of Artisan commands, in the next chapter we are going to see how we can add a cron entry that will run the scheduler every minute. Also, we are going to install and configure a Supervisor, which will be responsible for running our Queues.

Configuring the Scheduler and the Supervisor

In order to set up the Scheduler, we just need to add one cron entry on the server that will run the schedule:run command every minute. We can achieve that using this command (if this is your first time adding a cron entry, just press 1 to use nano as the default editor, when prompted, or any other editor you might prefer):

crontab -e

Under all of the comments in that file, just paste this line:

* * * * * cd /var/www/laravel && php artisan schedule:run >> /dev/null 2>&1

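Save and exit the file. Cron picks up crontab changes on its own, so a restart is not strictly required, but if you want to be thorough, you can restart the service using this command: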

sudo systemctl restart cron

Later on, we can check the status of the service like this:

sudo systemctl status cron

That's it regarding cron and running the Task Scheduler.
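If you would rather add the entry from a script than through the editor, one possible approach is to filter the existing crontab and append the line only when it is missing. The sketch below uses a hypothetical ensure_cron_entry helper that reads the current crontab from standard input and prints the updated one, so running it twice does not duplicate the entry.

```shell
#!/usr/bin/env bash
# The scheduler entry from this article.
SCHEDULER_ENTRY='* * * * * cd /var/www/laravel && php artisan schedule:run >> /dev/null 2>&1'

# Hypothetical helper: copy the crontab given on standard input to standard
# output and append the entry unless an identical line already exists.
ensure_cron_entry() {
  local entry="$1" current
  current="$(cat)"
  if [ -n "$current" ]; then
    printf '%s\n' "$current"
  fi
  if ! printf '%s\n' "$current" | grep -qxF -- "$entry"; then
    printf '%s\n' "$entry"
  fi
}

# Demonstration with an empty crontab on standard input.
printf '' | ensure_cron_entry "$SCHEDULER_ENTRY"
```

On the server, the pieces could be combined as crontab -l 2>/dev/null | ensure_cron_entry "$SCHEDULER_ENTRY" | crontab -, which reads the current crontab and installs the result.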

Next, we are going to install and configure Supervisor. Laravel jobs are typically processed by running the queue:work Artisan command on the server. But the command may fail for a variety of reasons. That is why we need a Supervisor to monitor the queue:work process and restart it if something unexpected happens.

We can install Supervisor by using this command:

sudo apt install supervisor

Check the status of the service by running:

sudo systemctl status supervisor

Now, we need to create a configuration file that will instruct the Supervisor how our processes should be monitored. Use this command to create a new file in the appropriate location:

sudo nano /etc/supervisor/conf.d/laravel-worker.conf

Here is an example that you can paste in that file:

[program:laravel-worker]

process_name=%(program_name)s_%(process_num)02d

command=php /var/www/laravel/artisan queue:work --sleep=3 --tries=3 --max-time=3600

autostart=true

autorestart=true

stopasgroup=true

killasgroup=true

user=www-data

numprocs=1

redirect_stderr=true

stdout_logfile=/var/www/laravel/queue-work.log

stopwaitsecs=3600

Something to note here is that we are running a single queue:work process, which is specified with the numprocs directive. You may increase this number if you need to handle a larger load of jobs, and the Supervisor will monitor each of those processes.

As mentioned in the docs, you should ensure that the value of stopwaitsecs is greater than the number of seconds consumed by your longest running job. Otherwise, the Supervisor may kill the job before it has finished processing. We have also added a path to the queue-work.log file that we can check if anything goes wrong.

For the changes to take effect, we need to reread, update, and start the workers by running these 3 commands (one by one, row by row):

sudo supervisorctl reread

sudo supervisorctl update

sudo supervisorctl start "laravel-worker:*"

If we check the status using the supervisorctl command, we should see that our process is running:

sudo supervisorctl status

If we want, we can also check the log file we defined by running this command:

sudo tail /var/www/laravel/queue-work.log

To find out more about the queue:work command, Supervisor, and creating your own custom commands, you can check out one of my other articles here: Running custom Artisan commands with Supervisor.

Creating a deployment script

What's left is to create a simple shell script that we can run from our local computer to deploy changes to the server. First, create a deployment folder inside the root folder of your local Laravel installation. Then create two files inside of it: deploy.sh and procedure.sh. Add this to the deploy.sh file:

#!/bin/bash

printf "\nInitiating deploy procedure:\n"

ssh user@203.0.113.0 bash -s < procedure.sh

printf "\nDeploy completed.\n"

In this file, we are connecting to our server using SSH as we usually would. Additionally, we are feeding the contents of the procedure script to a bash process on the server through standard input (bash -s < procedure.sh), so the commands run remotely while their output shows up in our local terminal. The procedure script contains the group of commands we will run on each deployment. Add this to the procedure.sh file:

# Changing the current directory

cd /var/www/laravel

# Putting the app in maintenance mode

php artisan down

# Fetching latest changes from GitHub

git fetch --all

git pull

# Installing Composer dependencies

composer install --no-interaction --prefer-dist --optimize-autoloader --no-dev

# Restarting PHP-FPM

sudo service php8.3-fpm restart

# Running Laravel specific commands

php artisan optimize:clear

php artisan config:cache

php artisan route:cache

php artisan migrate --force

php artisan queue:restart

# Bringing the app back from maintenance mode

php artisan up

As you can see from the comments in that file, we first go into our app's directory, put the app in maintenance mode, fetch the code updates from GitHub, install the Composer dependencies, restart PHP-FPM, run a few Artisan commands (to clear the caches, cache the config and routes, run the migrations, and restart the queue workers), and finally bring the app back from maintenance mode. To run the script, position yourself in the deployment folder (cd deployment) in your local terminal and just run:

sh deploy.sh

When creating a script, make sure that it uses Unix line separators (LF) and that you are running the script from a terminal that can connect to the server using SSH, like Git Bash.
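If you are not sure which line separators a file uses, you can check for carriage returns and strip them if needed. Here is a minimal sketch; the two helper names are my own and not part of any tool.

```shell
#!/bin/bash

# Hypothetical helper: succeed if the file contains a carriage return,
# which usually means it has Windows (CRLF) line endings.
has_crlf() {
    cr="$(printf '\r')"
    grep -q "$cr" "$1"
}

# Hypothetical helper: remove every carriage return from the file in place.
# The result is written back into the original file, so its permissions
# are kept.
to_lf() {
    tmp_file="$1.tmp"
    tr -d '\r' < "$1" > "$tmp_file" && cat "$tmp_file" > "$1" && rm -f "$tmp_file"
}

# Example usage from the deployment folder:
# for f in deploy.sh procedure.sh; do
#     if has_crlf "$f"; then to_lf "$f"; fi
# done
```

Most editors can also switch a file to LF directly, which is probably the easier option if you only need to do it once.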

Here is what everything might look like when you are done:

This is, of course, a simple deploy script that can serve as the basis for any other commands you might like to add to your deploy procedure. Feel free to make any changes to the procedure.sh file as you see fit. Keep in mind that using this script, you will experience about 15-30 seconds of downtime, depending on how many procedures you add to the script and how long they take to execute.
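One change you might consider is how the procedure behaves when a step fails. As written, procedure.sh keeps going after a failed command (for example, it would still run the migrations after a failed git pull). The sketch below is a hypothetical variant, not the script used in this article: it chains the same kind of steps with && so that the chain stops at the first failure, and it still tries to bring the app back from maintenance mode afterwards. The function name is an assumption made for this example.

```shell
#!/bin/bash

# Hypothetical variant of procedure.sh: stop at the first failing step,
# but still try to bring the app back from maintenance mode.
deploy_with_recovery() {
    app_dir="$1"
    deploy_status=0

    # The steps run in a subshell and are chained with &&, so the chain
    # stops as soon as one command returns a non-zero exit code.
    (
        cd "$app_dir" &&
        php artisan down &&
        git pull &&
        composer install --no-interaction --prefer-dist --optimize-autoloader --no-dev &&
        sudo service php8.3-fpm restart &&
        php artisan optimize:clear &&
        php artisan config:cache &&
        php artisan route:cache &&
        php artisan migrate --force &&
        php artisan queue:restart
    ) || deploy_status=$?

    # This runs whether or not the steps above succeeded.
    ( cd "$app_dir" && php artisan up ) || echo "Could not run 'php artisan up'" >&2

    # Report the exit code of the first failing step (0 if none failed).
    return "$deploy_status"
}

# Example usage at the end of procedure.sh:
# deploy_with_recovery /var/www/laravel
```

Whether the app should come back up after a failed step is a judgment call. After a half-applied migration, for instance, you might prefer to leave it in maintenance mode and investigate first.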

There are ways to achieve zero downtime using symlinks, and there are also tools, packages (Deployer, Envoy), and services (Envoyer, Launchdeck), which can make that easier for you or completely automate it. I won't address that topic in this article, but if you are, for example, running an app that provides services and has paying customers, I suppose you will want to look into those ways to achieve zero downtime; otherwise, those simple shell scripts we created above should probably suffice. Again, it all depends on your current needs.

Optimizations

Before we get to the very end, there is just one thing I would like to mention. In this example, we were using a server with quite limited resources (1 CPU core, 1 GB of RAM), and we were running all of the services needed on that single server (mainly NGINX, PHP-FPM, and MySQL). While that should be enough for small and maybe some medium-sized apps (depending on the setup and optimizations), as your load increases, you might consider servers with more resources or even using a separate server for MySQL.

If we take into account that in this article we used a server that has 1GB of RAM, MySQL 8 can take quite a bit of that memory. The docs state that "the default configuration is designed to permit a MySQL server to start on a virtual machine that has approximately 512MB of RAM." That would mean that MySQL could take half of the available RAM we have on our example server or even more.

Beyond that, MySQL has a feature called the Performance Schema that is used for monitoring MySQL server execution at a low level. Another part from the docs nicely explains how the feature is allocating memory: "The Performance Schema dynamically allocates memory incrementally, scaling its memory use to actual server load, instead of allocating required memory during server startup. Once memory is allocated, it is not freed until the server is restarted." So it is important to track the memory usage on the server, as it can gradually increase.

While there are ways to optimize MySQL and Performance Schema to consume less memory, the simplest way would be to disable Performance Schema altogether on servers with limited memory, like ours. Doing that can significantly decrease the overall MySQL memory usage. There are, of course, some trade-offs if you choose to do that: you may not be able to diagnose performance problems or resource usage as easily. There are quite a few discussions on whether that feature should be turned off, but my general view is that you probably won't be using it much, so it makes sense to disable it on servers with limited resources, especially since you can turn it on again whenever you please (with the downside of not having historical data for the period it was turned off). Of course, use cases can vary, so again, it's up to you to decide how you would like to proceed regarding this option.

If you do decide that you want to disable the Performance Schema, here is how you can do it. First, open the my.cnf file like this:

sudo nano /etc/mysql/my.cnf

Add these values at the end of the file:

[mysqld]

performance_schema = 0

Now we just need to restart MySQL for the changes to take effect:

sudo service mysql restart

That's it. You can check if the Performance Schema was turned off by logging into MySQL:

sudo mysql

and running this query:

SHOW VARIABLES LIKE 'performance_schema';

If the value is set to OFF, you are good to go. Just to note, in my case, the overall server memory usage decreased by about 250 MB when I disabled this feature.
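If you want to compare the numbers on your own server, one simple approach is to look at the resident memory of the MySQL process before and after the change. The sketch below assumes the process is named mysqld and uses a helper name I made up for this example. Keep in mind that it measures only the MySQL process, not the overall server memory usage I mentioned above.

```shell
#!/bin/bash

# Hypothetical helper: sum resident set sizes given in KiB (one per line on
# stdin) and print the total in MiB, rounded down.
sum_rss_mib() {
    awk '{ total += $1 } END { printf "%d\n", total / 1024 }'
}

# Example usage on the server (assumes the process name is mysqld):
# ps -o rss= -C mysqld | sum_rss_mib
```

Run it once before disabling the Performance Schema and again some time after the restart, since the memory usage tends to grow as the server handles load.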

One other thing you can do (which we won't cover here in detail) to ensure you don't run out of memory when having limited resources is to add some reserved swap space on your server. That disk swap space can be used when you run out of RAM, preventing the OOM (out-of-memory) Killer from shutting down your services. Usually 1 GB of such space should be enough, but feel free to adjust that to your needs. There are some discussions regarding hard and solid-state drives and when and if you should add swap space to an SSD storage device, so make sure to check those if you decide to proceed in that direction.

Conclusion

Congratulations! You made it to the end! This was my longest article so far, and I've tried to include everything you might need to host a fully functional Laravel app on the LEMP stack. Along the way, there were some things I've just mentioned but didn't explain in detail; otherwise, the article would have been a lot longer. Regarding those topics, I've tried to at least point you in the right direction. So, as with almost everything, there are more areas to explore on your own. In any case, I think we covered the most important parts and problems you might encounter when trying to set up your own server using the LEMP stack.

Until next time, take care.